Data Compression - a short backgrounder

Data compression is the process of encoding information using fewer bits than

the original. Compression techniques can be used to increase the quantity of data

which may be stored on media which otherwise might be too restricted in capacity.

Similarly, network speed may be apparently increased using compression to reduce

the quantity of data being transferred.

Some schemes are completely reversible so that the original data can be accurately

reconstructed (lossless data compression), while others accept some loss of data in

order to achieve higher compression (lossy data compression). The latter category

is appropriate for audio and video compression (eg. MP3 and MPEG), but here we

consider applications where compression must be lossless.

Compression algorithms may be designed to suit exactly any known characteristics of

the data, but what is often needed is a general purpose algorithm which extracts the

redundancy from the repetitive nature of certain computer files, especially those

containing program code and other humanly-readable text.

Lempel and Ziv

The Lempel-Ziv compression technique developed in 1977 is the basis for many popular

algorithms for lossless compression, and many of its derivatives are generally free

from patent claims.

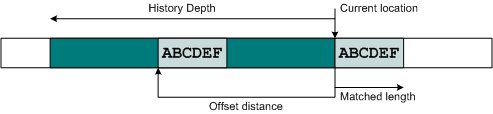

The LZ77 methods work by identifying repeated

strings, looking back (never forward) into a sliding window of history of the data.

The potential set of strings to be matched against is called the dictionary, which

is populated as the data arrives at the encoder. Different implementations may put

more or less effort into identifying such patterns, resulting in many distinct

compressed representations which expand back to the same original data.

An important parameter of the LZ77 algorithm is the amount of history which is

available to search. This is called the history depth: the size of the sliding

window looking backward from the current position. The larger the history, the

better the compression performance, with ever decreasing returns. Where possible,

data should be processed in as large a block as possible, and with as large

a history as possible, remembering that both the compressor and decompressor

must provide memory for this history.

The LZ77 methods differ mostly in how they encode the matched information.

For example the popular DEFLATE variant (which forms the basis of many commonly used

"zip" utilities, and the Zlib library) combines an enhanced LZ77 string matcher with

statically or dynamically chosen Huffmann codes for optimal performance. The LZMA

variant used in 7-Zip follows the LZ77 stage with Markov chain and range encoders.

Such state-of-the-art algorithms used on desktop computers require significant memory

resources and CPU power to achieve good results, and are not always practical for

hardware implementation.

LZRW Algorithms

One particular family of LZ77 derivatives is LZRW. These were developed by

Ross Williams around 1990 and are particularly amenable to high throughput

hardware implementation. Both the earlier LZRW1-A and the later LZRW3 use

the same hash table lookups to identify matches, and so provide (almost)

identical compression. However, LZRW1 transmits the match information as

offset/length pairs, whereas LZRW3 transmits hash/length pairs, allowing

greater history depths to be referenced at the expense of maintaining an

identical hash table at the decompressor.

Some small but important modifications are made to the original algorithms which

allow better hardware implementation. For both LZRW1 and LZRW3, a proper

sliding window (rolling history) can be used for longer blocksizes without

increasing the RAM utilisation significantly. This will be appropriate for

customers moving to larger frame sizes, or for storage applications which

use much larger data blocks.

The compression ratio (defined here as compressed length as a percentage of the

original length) is obviously highly dependent on the characteristics of the data

itself. The table below shows some average results for test files taken from the

standard Calgary and Canterbury datasets often used for comparison.

The three files chosen represent different corners of the possible data space;

the "easy" file is a bitmap which compresses well, the "text" file contains much

humanly-readable redundancy like most email and HTML pages, and the "hard" file

contains database numbers with only a small amount of repetition.

For any given dataset, the compression ratio improves as the history increases,

but it is clear that the primary factor is the data itself. Note that the

compression ratio on standalone short frames will never be that impressive,

as there is simply not enough redundancy to extract!

| LZRW3 |

HISTORY DEPTH (Bytes) |

| DATASET (filename) |

2K1 |

4K |

8K2 |

16K2 |

| Easy (canterbury/ptt5) |

26.0% |

25.2% |

24.8% |

24.5% |

| Text (canterbury/asyoulik.txt) |

74.9% |

70.0% |

66.0% |

62.7% |

| Hard (calgary/geo) |

92.7% |

89.1% |

85.8% |

83.7% |

| Canterbury Average (11 files) |

50.7% |

48.1% |

45.8% |

43.7% |

1. LZRW1 performance for 2K is marginally better than LZRW3.

2. History depths >4K bytes are not supported by LZRW1.

For area and throughput metrics in popular FPGA technologies,

please see the LZRW Compression Core

and Compression System product pages.

Compression and Encryption

Data compression and encryption are often closely related within a system.

It is worth noting that data which has been encrypted will not compress effectively

due to the way in which encryption naturally removes any repeats or patterns from

the plain data. This means that when these two operations co-exist, the order in

which they are applied is very important, as is a full understanding of the relative

data throughputs through the compression function. Significant care must be taken

in the design of such a system to ensure the most effective use of the hardware.

Contact

For more detailed information on this or any of our other products and services,

please feel free to email us at

helioncores@heliontech.com and we will be pleased to discuss how we can assist

with your individual requirements.

|